Introduction

Building software that survives rapid growth requires more than just writing clean code; it demands a structural approach to complexity. Weak architectural decisions made early on often lead to unmaintainable systems, whereas robust architectures support expanding teams and reduce long-term costs. If you are looking to future-proof your infrastructure, understanding this is how to build scalable systems is the first step toward managing high throughput and strict availability requirements.

Scalable systems rely on specific building blocks working in harmony. Rather than a single monolith, these architectures distribute work across independent machines to appear as one coherent unit. Key components typically include:

- Load Distribution mechanisms like load balancers to manage incoming traffic

- Compute resources such as microservices or serverless functions for executing logic

- Data Storage solutions, ranging from SQL to NoSQL, for persistent management

- Messaging systems like queues for handling asynchronous communication

Success also depends on optimizing the developer experience. By establishing a "golden path" for deployment and investing in self-service infrastructure, teams can reduce cognitive load and eliminate bottlenecks. This strategic combination of technical components and efficient workflows ensures the system can handle increasing load without failing.

Scale With Reliable Hosting

Build a robust foundation for your scalable systems with Hostinger’s high-performance, cost-effective infrastructure.

Tip 1: Prioritize Quality Attributes Over Functional Requirements

Focusing solely on what a system does often leads to architectural failure. While functional requirements define the behavior, quality attributes like scalability, performance, and resilience determine long-term success. If you design only for functionality, the resulting architecture may crumble under load or fail to recover from outages. To truly understand this is how to build scalable systems, you must treat non-functional requirements as primary design constraints.

Prioritize characteristics that ensure system stability and growth. For instance, a biotech startup processing genomic data needs high throughput and low latency just as much as it needs specific analysis algorithms.

- Define specific metrics for performance, availability, and scalability before writing code.

- Validate architecture choices against these non-functional requirements rather than just user stories.

- Design for failure to ensure the system remains resilient even when individual components crash.

- Implement mechanisms for resource allocation to handle "pay as you go" scaling demands effectively.

Tip 2: Audit Developer Experience and Build a Golden Path

Efficient scaling relies heavily on the speed at which your team can deliver code. If developers lose time on environment setup, inconsistent tooling, or deployment confusion, system growth stalls. To understand this is how to build scalable systems, you must optimize the human element of engineering by reducing friction and cognitive load.

Create a standardized "golden path" that defines the correct way to create, deploy, and monitor a service. This approach makes the right workflow the path of least resistance, ensuring reliability and consistency across all services. You should also invest in self-service infrastructure so engineers can spin up environments, run tests, and deploy to staging without waiting for approvals.

- Audit bottlenecks: Identify where engineers lose time during local setup or production releases.

- Standardize tooling: Enforce a uniform stack for compute, storage, and networking to prevent configuration drift.

- Enable self-service: Automate provisioning so developers do not need to file tickets for basic resources.

- Measure cognitive load: Regularly survey the team to find out which processes are mentally taxing or overly complex.

Reducing manual overhead allows your architecture to scale alongside your business growth.

Tip 3: Invest in Self-Service Infrastructure

To understand this is how to build scalable systems, you must eliminate bottlenecks caused by manual operational tasks. Engineering teams often lose significant time to environment setup and deployment confusion. A scalable system requires a scalable team, which means developers must be able to provision resources and deploy code without waiting for administrative approval. By removing friction from the development lifecycle, you accelerate iteration and reduce cognitive load.

Implement a "golden path" that defines the standard way to create, deploy, and monitor services. This approach makes the correct workflow the path of least resistance, ensuring consistency across the organization. When engineers can spin up environments and run tests autonomously, they focus on building features rather than fighting tooling.

- Automate provisioning: Allow engineers to spin up staging and testing environments instantly through internal APIs.

- Standardize deployment: Create a unified pipeline for continuous integration and delivery to prevent configuration drift.

- Centralize documentation: Maintain clear, accessible guides for using the self-service tools.

- Reduce manual tickets: Ensure infrastructure access does not require filing support tickets or waiting for manual intervention.

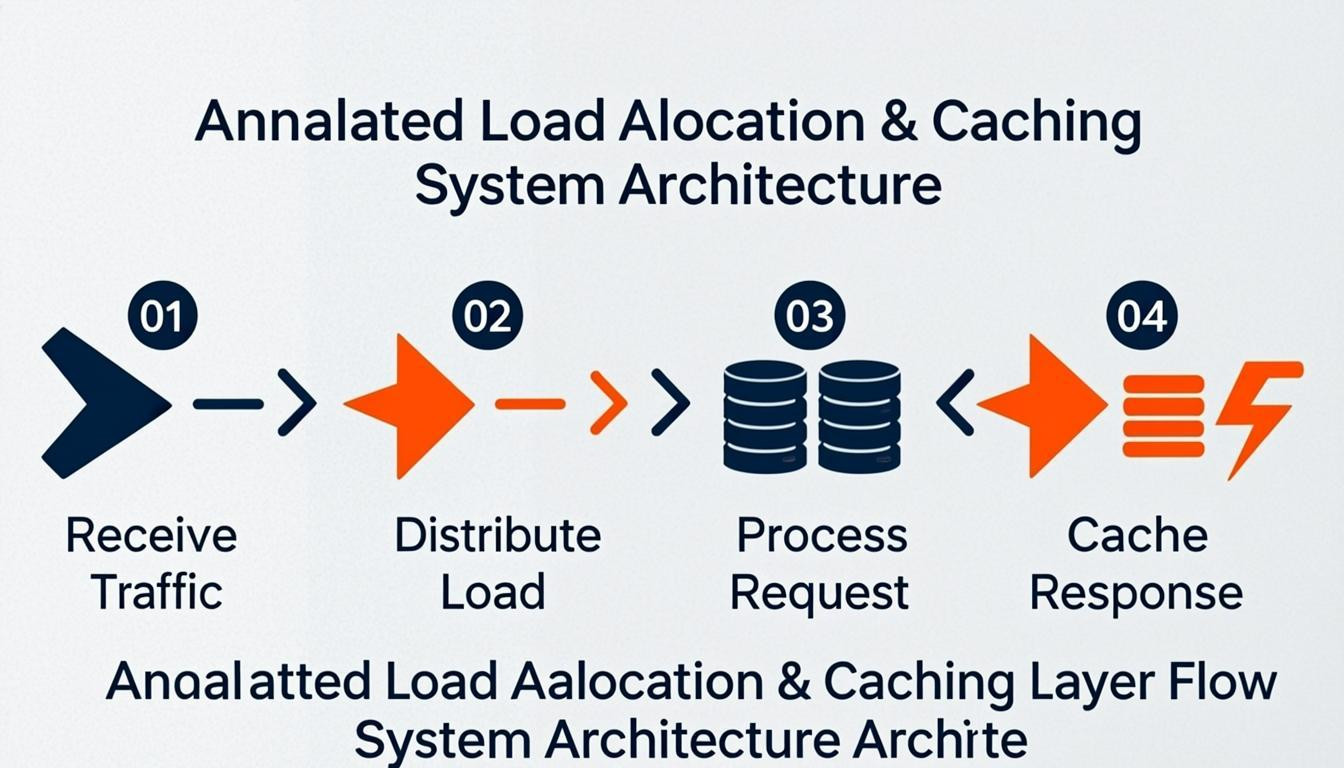

Tip 4: Implement Strategic Load Distribution and Caching Layers

Effectively managing traffic flow is essential when learning this is how to build scalable systems. Relying on a single server creates a single point of failure and inevitable bottlenecks. To prevent this, you must distribute incoming network traffic across multiple servers using load balancers. This ensures no single instance bears too much burden, maintaining high availability and responsiveness during traffic spikes.

Simultaneously, you should minimize direct database hits by implementing robust caching layers. Caching frequently accessed data in fast storage systems like Redis or utilizing Content Delivery Networks (CDNs) drastically reduces latency. This approach lightens the load on your backend infrastructure, allowing your application to handle higher concurrency without degrading performance. By combining traffic distribution with strategic data retrieval, you create a resilient architecture capable of scaling horizontally.

- Deploy load balancers to spread requests evenly across available compute resources

- Utilize in-memory data stores to cache expensive query results

- Implement CDNs to serve static content closer to the end-user geographically

- Separate read-heavy traffic from write-heavy traffic to optimize database performance

Tip 5: Choose the Right Database Scaling Strategy

Understanding when to scale vertically versus horizontally is essential for maintaining system performance. Vertical scaling involves upgrading a single server's resources, which is suitable for initial stages or predictable workloads. This approach allows teams to prioritize product development over complex distributed systems engineering. However, as demand grows, horizontal scaling becomes necessary to ensure long-term resilience and handle massive traffic loads across multiple nodes.

To build scalable systems effectively, implement a strategy based on your current growth stage. Use sharding to partition data across different servers, distributing the computational load and preventing bottlenecks. Integrating a distributed cache layer also drastically reduces backend strain by serving frequently accessed data quickly. Cloud-native database solutions often support both models but generally favor horizontal scaling for enterprise-level reliability.

Key takeaways for implementation include:

- Start vertical for simplicity if growth projections are manageable and linear.

- Shift to horizontal scaling when serving millions of users or requiring high availability.

- Implement sharding to split datasets and distribute workload efficiently.

- Utilize caching at the infrastructure level to minimize direct database hits.

Tip 6: Adopt Asynchronous Communication Patterns

Decoupling services through asynchronous communication is a fundamental method to manage high request throughput and strict availability requirements. In distributed systems, components must coordinate effectively without creating bottlenecks. By decoupling the sender from the receiver, systems handle traffic spikes gracefully and ensure that a failure in one component does not cascade to others. This approach allows independent machines to work together as a coherent system while processing background tasks efficiently.

To implement this pattern effectively, focus on message-oriented architectures that buffer requests and balance loads over time.

- Implement Message Queues: Use queues to decouple immediate request processing from background execution. This allows the system to absorb sudden traffic bursts and process tasks at a sustainable pace.

- Utilize Event Streams: For real-time data ingestion, employ event streaming to capture and distribute state changes. This supports complex data flows and ensures data consistency across services.

- Design for Idempotency: Ensure that processing the same message multiple times does not result in errors or data corruption, which is crucial for reliability in distributed environments.

Adopting these strategies is this is how to build scalable systems that remain responsive under heavy load.

Tip 7: Enforce Rate Limiting and Consistent Hashing

To maintain stability as traffic grows, you must control request volume and distribute data effectively. This is how to build scalable systems that remain reliable under stress. Rate limiting prevents any single client from overwhelming your resources, protecting services from abuse and ensuring fair availability for all users. Simultaneously, consistent hashing allows for efficient data distribution across multiple servers, minimizing the impact of adding or removing nodes from a cache or database cluster.

Implement these strategies to optimize architecture performance:

- Set granular rate limits: Define thresholds based on API endpoints or user tiers rather than a global limit to prevent bottlenecks.

- Use token buckets or leaky buckets: Apply these algorithms to smooth out bursts of traffic and maintain a steady flow of requests.

- Distribute keys uniformly: Utilize consistent hashing to allocate data to specific nodes, ensuring that even if a server fails, only a fraction of the keys are remapped.

- Monitor throttled requests: Track the frequency of rate limit errors to fine-tune your thresholds and identify potential abuse patterns early.

Conclusion

Building robust infrastructure requires mastering core components like load balancers, caching layers, and messaging queues. A system becomes difficult to maintain not simply due to size, but because of weak architectural decisions made early on. Strong architecture scales with teams, survives developer turnover, and reduces bugs over time. Implementing mechanisms like rate limiting and consistent hashing is vital for protecting services and maintaining availability as traffic grows.

To succeed, teams must move beyond theory and practical application. Audit your current developer experience to identify bottlenecks in environment setup or deployment. Create a "golden path" that defines the standard way to create and monitor services, ensuring it is the path of least resistance. Investing in self-service infrastructure allows engineers to spin up environments without waiting for approvals. This is how to build scalable systems that remain coherent and efficient across distributed networks. Start refining your architecture today to ensure your platform handles future growth with ease.

Comments

0