Introduction

Understanding duplicate content matters because it directly affects how well your website ranks in search results. When search engines encounter identical or strikingly similar content across multiple URLs, they have to choose which version to show users. If the algorithm picks the wrong page, or if the "link equity" is split across several versions, none of those pages may perform as well as they should. The usual result is reduced organic traffic and lower visibility for your most important pages.

To define the problem, we have to look at how search engines interpret repeated content. It isn't always a case of malicious copying; often, duplicate content happens accidentally due to technical configurations. Search engines want to filter out duplicates to give users a diverse and valuable experience, but they don't always penalize the site. Instead, they might just struggle to index the correct version.

Common examples of duplicate content include:

- URL parameters used for tracking or sorting

- Printer-friendly versions of web pages

- HTTP vs. HTTPS versions of the same site

- Scraped or syndicated content

Addressing these issues ensures that search engines can easily crawl, index, and attribute value to the canonical version of your content.

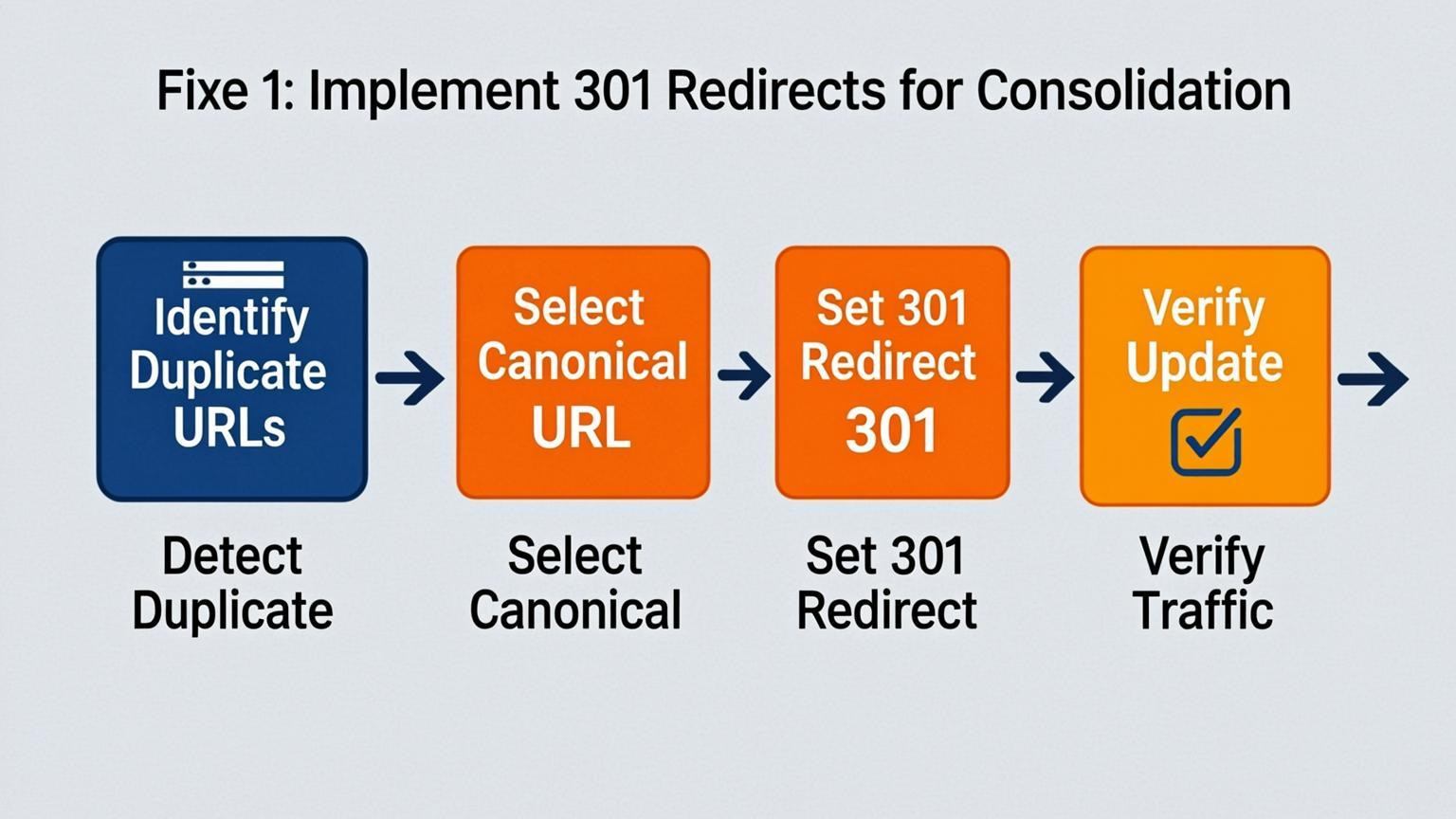

Fix 1: Implement 301 Redirects for Consolidation

Duplicate content becomes a problem when search engines cannot tell which of several URLs hosting the same information is the authoritative one. To fix this without losing the signals those pages have accumulated, you can implement 301 redirects. A 301 is a permanent redirect: it funnels traffic and link equity from redundant or outdated pages toward a single, canonical version.

Start by auditing your site to find pages with significant content overlap, such as product variations, landing pages for expired campaigns, or HTTP versions of HTTPS pages. Choose the strongest page (usually the one with the most backlinks or traffic) as the target destination.

- Identify duplicates: Use site crawlers to find pages with duplicate meta tags or body content.

- Select the canonical URL: Designate the primary page you want search engines to index.

- Apply the redirect: Update your server configuration or CMS to redirect old URLs to the chosen canonical page.
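
The "identify duplicates" step can be sketched in a few lines of Python. This is a minimal illustration, not a replacement for a site crawler: it assumes you already have the body text of each page (the `pages` dictionary and the product copy in it are made up for the example), and it only catches pages whose text is identical after whitespace and case are normalized.

```python
import hashlib
import re
from collections import defaultdict


def fingerprint(body_text: str) -> str:
    """Hash the body text after collapsing whitespace and lowercasing."""
    normalized = re.sub(r"\s+", " ", body_text).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def group_duplicates(pages: dict[str, str]) -> list[list[str]]:
    """Return groups of URLs whose normalized body text is identical."""
    groups = defaultdict(list)
    for url, body in pages.items():
        groups[fingerprint(body)].append(url)
    # Keep only fingerprints shared by two or more URLs.
    return [sorted(urls) for urls in groups.values() if len(urls) > 1]


# Hypothetical crawl output: URL -> extracted body text.
pages = {
    "https://example.com/shoes/blue": "Blue running shoe.  Lightweight mesh upper.",
    "https://example.com/shoes/blue-size-10": "Blue running shoe. Lightweight mesh upper.",
    "https://example.com/shoes/red": "Red running shoe. Leather upper.",
}
duplicate_groups = group_duplicates(pages)
```

Exact hashing misses near-duplicates, such as pages that differ by a single sentence. Catching those would need a similarity measure, which is outside the scope of this sketch.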

For example, if `example.com/shoes/blue` and `example.com/shoes/blue-size-10` show the same product details, redirect the specific size page to the general product page. This consolidates ranking signals and ensures users always land on the most relevant content.
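
How a redirect map behaves can be sketched in Python before it goes into a server or CMS configuration. The example below is illustrative only. The `/shoes/blue-old` path is hypothetical, and the idea of flattening chains so every old URL points straight at its final destination is a common precaution that this article does not itself prescribe.

```python
def resolve_redirect(path: str, redirects: dict[str, str], max_hops: int = 10) -> str:
    """Follow a redirect map to its final target, refusing loops and long chains."""
    seen = {path}
    current = path
    while current in redirects:
        current = redirects[current]
        if current in seen or len(seen) > max_hops:
            raise ValueError(f"redirect loop or overly long chain starting at {path}")
        seen.add(current)
    return current


def flatten(redirects: dict[str, str]) -> dict[str, str]:
    """Rewrite every entry so it points directly at its final destination."""
    return {source: resolve_redirect(source, redirects) for source in redirects}


def respond(path: str, redirects: dict[str, str]) -> tuple[int, str]:
    """Return (status, location): 301 for redirected paths, 200 otherwise."""
    if path in redirects:
        return 301, resolve_redirect(path, redirects)
    return 200, path


# Hypothetical map: the size page first moved to an interim URL, then to the product page.
redirect_map = {
    "/shoes/blue-size-10": "/shoes/blue-old",
    "/shoes/blue-old": "/shoes/blue",
}
flat_map = flatten(redirect_map)
```

With the flattened map, a request for `/shoes/blue-size-10` is answered with a single 301 to `/shoes/blue` instead of two hops.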

Fix 2: Utilize the Canonical Tag Effectively

Some similar pages need to stay live, and the canonical tag lets you manage them without losing search equity. It acts as a strong hint for search engines, specifying which URL is the "master" copy when multiple versions exist. This consolidates indexing signals, so that backlinks and ranking authority point to the preferred page rather than being diluted across variations.

To put this into action, add a `rel="canonical"` link element in the `<head>` section of your HTML code. For instance, if `https://example.com/page` is your preferred version, and `https://example.com/page?sort=asc` is a duplicate, the duplicate page should include:

```html
<link rel="canonical" href="https://example.com/page" />
```

Follow these steps for proper application:

- Self-referencing canonicals: Add the tag to the preferred page pointing to itself, so that parameterized variants of its URL still declare the clean URL as canonical.

- Absolute URLs: Always use the full, absolute path in the `href` attribute.

- Consistent content: Ensure the canonical page closely matches the content of the duplicate page, otherwise search engines may ignore the tag.
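
The first two checks in this list can be automated. The sketch below uses Python's standard `html.parser` to collect canonical links from a page and reports whether the tag is missing, duplicated, relative, or fine. The class and function names are illustrative, and for simplicity it scans the whole document rather than only the `<head>`.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class CanonicalFinder(HTMLParser):
    """Collect the href of every <link rel="canonical"> in a document."""

    def __init__(self) -> None:
        super().__init__()
        self.canonicals: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr_map = dict(attrs)
        rel_values = (attr_map.get("rel") or "").lower().split()
        if "canonical" in rel_values and attr_map.get("href"):
            self.canonicals.append(attr_map["href"])


def check_canonical(html: str) -> str:
    """Return 'missing', 'multiple', 'relative', or 'ok' for a page's canonical tag."""
    finder = CanonicalFinder()
    finder.feed(html)
    if not finder.canonicals:
        return "missing"
    if len(finder.canonicals) > 1:
        return "multiple"
    parsed = urlparse(finder.canonicals[0])
    # An absolute URL has both a scheme and a host.
    return "ok" if parsed.scheme in ("http", "https") and parsed.netloc else "relative"


duplicate_page = '<html><head><link rel="canonical" href="https://example.com/page"></head></html>'
relative_page = '<html><head><link rel="canonical" href="/page"></head></html>'
```

Running `check_canonical` over every crawled page gives a quick list of templates that need attention. Whether the canonical target's content actually matches still has to be checked separately.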

Fix 3: Master URL Parameter Handling

Dynamic URL parameters often cause search engines to index multiple versions of the same page, which makes them one of the most common sources of duplicate content. Common culprits include tracking codes for marketing campaigns or sorting options for e-commerce categories. If Googlebot spends its crawl budget on these near-infinite variations, it might miss your important content.

Google Search Console once offered a URL Parameters tool for this purpose, but Google retired it in 2022, so parameter handling now has to happen on your own site. Identify the parameters that do not change the core content of the page and point their URLs at the clean version.

- Identify the culprit: Look for parameters like `?utm_source=`, `?sessionid=`, or `?sort=price`.

- Classify the parameter: Decide whether it changes page content (like a product filter) or leaves it untouched (like a tracking ID).

- Choose the action: For parameters that don't change content, add a canonical tag pointing to the parameter-free URL and link internally only to that URL. You can then use the URL Inspection tool in Google Search Console to check which canonical Google selected.
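
To see which parameterized URLs collapse to the same page, it can help to strip the parameters that do not change content and compare what is left. The sketch below uses Python's `urllib.parse`. The ignore list is an assumption built from the examples in this section and should be adapted to each site, since a `sort` parameter, for instance, may or may not matter on yours.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed ignore list, based on the examples above; adjust per site.
IGNORED_PARAMS = {"sessionid", "sort"}
IGNORED_PREFIXES = ("utm_",)


def strip_ignored_params(url: str) -> str:
    """Drop parameters that don't change page content; keep the rest in order."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in IGNORED_PARAMS and not key.startswith(IGNORED_PREFIXES)
    ]
    # The fragment is dropped as well, since it is never sent to the server.
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


clean_url = strip_ignored_params(
    "https://example.com/shoes?utm_source=news&color=blue&sort=price"
)
```

Here `clean_url` keeps `color=blue`, which is treated as a content-changing filter, and drops the tracking and sorting parameters. The result is a reasonable candidate for the `href` of the canonical tag on every variant.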

Fix 4: Minimize Boilerplate Repetition

Even standard website elements can dilute your SEO value. Boilerplate repetition occurs when large blocks of text, such as lengthy copyright notices, extensive site-wide footer links, or repetitive navigation menus, appear on every page. When this non-unique text overwhelms the actual page content, search engines may struggle to differentiate your pages, potentially leading to indexing issues.

To implement this fix, condense your repeated elements and ensure the primary content on each page is significantly longer than the shared template text.

Implementation steps:

- Audit footers and sidebars: Remove unnecessary legal jargon or excessive lists of links from these areas.

- Shorten headers: Keep global navigation menus concise and descriptive.

- Use structured data: Instead of repeating address details in text on every location page, use schema markup to help search engines understand the data without adding bulk to the visible content.

- Focus on ratio: As a rule of thumb rather than an official threshold, aim for unique content to make up at least 50% of the visible page text so that individual pages are clearly distinguishable.
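
The ratio in the last step can be estimated with a short script. The sketch below assumes each page has already been split into text blocks (navigation, main copy, footer and so on) and treats any block that appears on every page as boilerplate. The store pages and their text are invented for the example, and word count is used as a rough stand-in for "visible page text".

```python
from collections import Counter


def unique_text_ratio(pages: dict[str, list[str]]) -> dict[str, float]:
    """For each page, return the share of words in blocks not repeated on every page."""
    if len(pages) < 2:
        raise ValueError("need at least two pages to tell boilerplate from unique text")
    # Count on how many pages each distinct block appears.
    block_counts = Counter(block for blocks in pages.values() for block in set(blocks))
    boilerplate = {block for block, count in block_counts.items() if count == len(pages)}
    ratios = {}
    for url, blocks in pages.items():
        total_words = sum(len(block.split()) for block in blocks)
        unique_words = sum(len(block.split()) for block in blocks if block not in boilerplate)
        ratios[url] = unique_words / total_words if total_words else 0.0
    return ratios


# Hypothetical location pages sharing a header and footer.
site = {
    "/stores/austin": [
        "Home Stores Contact",
        "Visit our Austin store on Main Street for fittings",
        "Copyright Example Inc",
    ],
    "/stores/boston": ["Home Stores Contact", "Boston store", "Copyright Example Inc"],
}
ratios = unique_text_ratio(site)
```

In this example the Austin page comes out well above the 50% mark while the Boston page falls below it, which flags Boston as the page that needs more unique copy.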

Fix 5: Curate External Syndication Carefully

Republishing content on third-party platforms can expand reach, but it also creates identical copies across the web, which is duplicate content by definition. To protect your search rankings, ensure syndication partners implement a canonical link pointing back to the original URL on your domain. This tag signals to search engines which version is the authoritative source, preventing dilution of link equity and ranking potential.

Implementation requires strict control over permissions and technical attributes. Before allowing republication, verify the partner's ability to add specific HTML elements to the page header.

- Require a Rel=Canonical Tag: The partner must include a `<link rel="canonical">` element pointing to your original URL in their code.

- Request Meta Noindex Tags: For lower-tier sites, ask for a noindex directive to keep the syndicated copy out of search indexes entirely.

- Include Backlinks: Ensure the content contains a clearly attributed link pointing to your original article.

For example, if a news outlet republishes your industry report, their page should acknowledge your domain as the primary source. Without these measures, search engines may struggle to identify the originator, potentially ranking the syndicator above you.
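
Once a partner has published, their page can be spot-checked for these signals. The sketch below parses a republished page with Python's standard `html.parser` and reports whether it carries a canonical link to your original, a `noindex` directive, or neither. The function name and the example URL are hypothetical, and the canonical comparison is an exact string match, so trailing slashes and similar differences would need normalizing first.

```python
from html.parser import HTMLParser


class HeadSignals(HTMLParser):
    """Record the canonical href and any robots noindex directive on a page."""

    def __init__(self) -> None:
        super().__init__()
        self.canonical = None
        self.noindex = False

    def handle_starttag(self, tag, attrs):
        attr_map = {name: (value or "") for name, value in attrs}
        if tag == "link" and "canonical" in attr_map.get("rel", "").lower().split():
            self.canonical = attr_map.get("href") or None
        elif tag == "meta" and attr_map.get("name", "").lower() == "robots":
            directives = [d.strip() for d in attr_map.get("content", "").lower().split(",")]
            self.noindex = self.noindex or "noindex" in directives


def syndication_status(partner_html: str, original_url: str) -> str:
    """Return 'canonical', 'noindex', or 'unprotected' for a republished page."""
    signals = HeadSignals()
    signals.feed(partner_html)
    if signals.canonical == original_url:  # exact match only
        return "canonical"
    if signals.noindex:
        return "noindex"
    return "unprotected"


# Hypothetical original URL and partner markup.
original = "https://example.com/industry-report"
partner_page = f'<html><head><link rel="canonical" href="{original}"></head></html>'
status = syndication_status(partner_page, original)
```

A result of `"unprotected"` is the case worth escalating with the partner, since nothing on the page tells search engines where the content originated.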

Conclusion

Understanding duplicate content is the first step toward maintaining a healthy website architecture and avoiding diluted rankings. While unintentional duplication often occurs due to URL parameters or CMS quirks, resolving these issues consolidates page authority and helps the most relevant version rank. Key takeaways for fixing these problems include:

- Implementing 301 redirects to point users and crawlers from duplicate pages to the canonical version.

- Using the rel="canonical" tag to signal the preferred URL when multiple similar pages must exist.

- Employing meta noindex tags to prevent non-essential pages, such as print versions, from being indexed.

Beyond immediate fixes, proactive monitoring is essential for long-term ranking stability. Regular site audits help identify new duplication caused by content scraping or site structure changes before they impact performance. Establishing a consistent internal linking strategy further reinforces the correct page hierarchy. By continuously managing these elements, webmasters can preserve their site's integrity and sustain organic visibility.
